Dazza Greenwood has been building agents for longer than several legal tech founders have been alive.

In the 1980s, as an undergraduate computer science student, he encountered AI assistants for the first time. His module introduced a then-new paradigm: human language, chat-based systems. The exercise was to build something modelled on ELIZA, the MIT chatbot whose therapist module ran on a simple heuristic. Find the keyword. Reflect it back to the user. When in doubt, tie it all back to the user’s parents. It was deterministic, a little absurd, and wildly popular with the people who tried it. Dazza learned some tricks and came away with a fascination with what AI was, and more importantly, what it could be.

Note: Some of the concepts in this episode may be unfamiliar. We cover them in the technical explainer at the end.

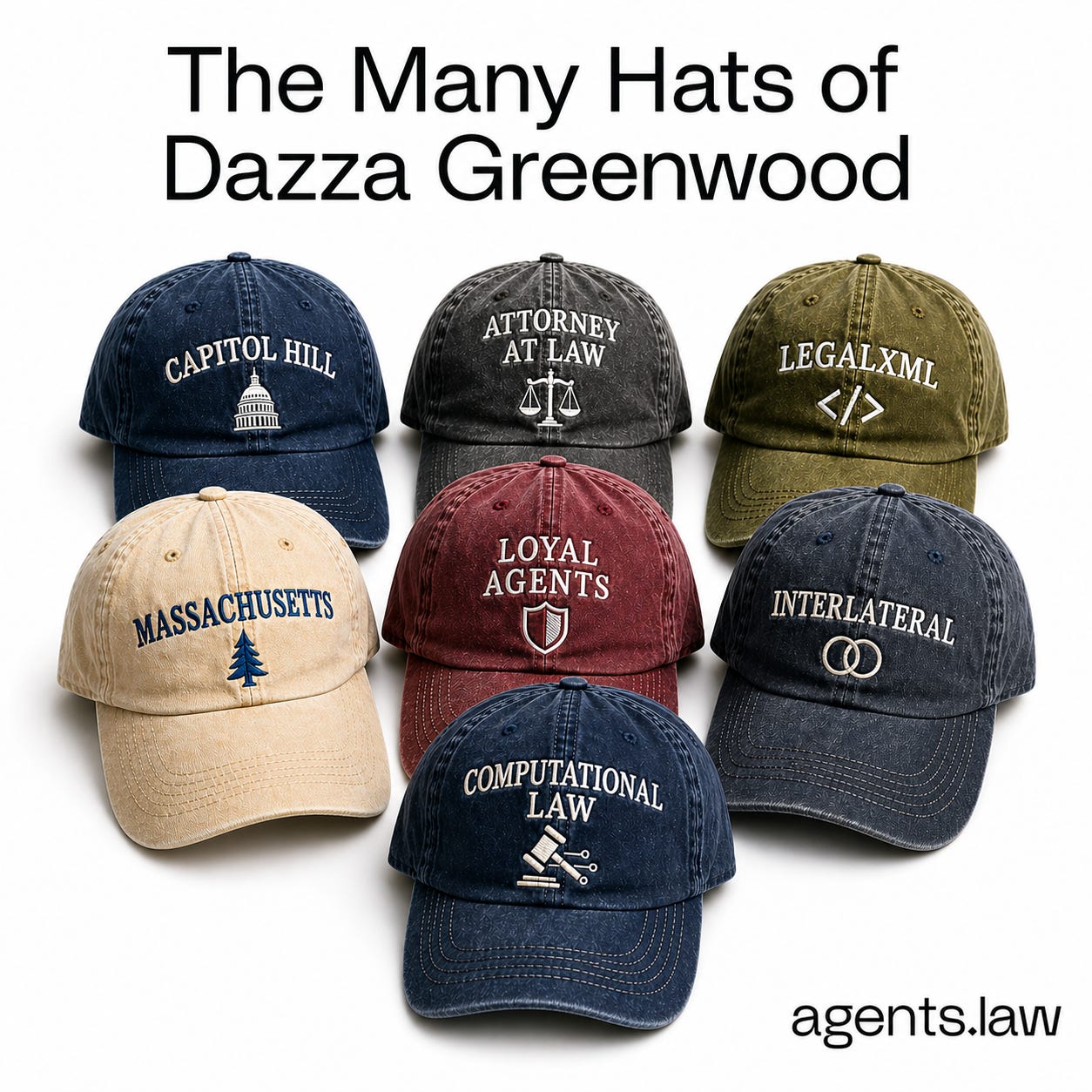

What followed was a career that has spanned dozens of initiatives around the world. Let’s just say that Dazza has worn (and continues to wear) a lot of hats. Legislative aide. Candidate for office. In-house Technology Counsel to the Commonwealth of Massachusetts (which, he notes, would be a Fortune 50 company if it were private). Standards architect. Stanford researcher. Platform builder.

He went to law school, he tells me, because he kept getting different answers from different lawyers to the same question and found it unacceptable. After years of practice, he still does not have a fully satisfactory answer to “what is the law?” but he at least knows how to find the relevant law himself, which was enough to let him return to technology without feeling incomplete.

He was doing legal tech, he says, before anyone called it that. Writing scripts to automate his work. Treating legal documents as data, to the extreme displeasure of colleagues who just wanted Microsoft Word (why is it always Word?).

Why didn’t the standard stick?

In the early 2000s, Dazza was one of the architects of LegalXML, an effort to create an international standard for marking up legal documents so they could be treated as structured data. He ran the e-contracts group. It took seven years to reach the status of a recognised international standard. It attracted a small community of what he describes as lawyer-geeks, who marked up their contracts in XML, built clause libraries, and imagined a future of genuinely interoperable electronic contracting.

That future did not really arrive, at least not in the way the group expected. A handful of vendors adopted the standard, mostly for workflows they were already running. The broader transformation never came.

The lesson Dazza draws from it is that the obstacle was never the standard. It was the culture. The love affair with Microsoft Word was not something a well-designed schema could fix. Standards, he says, have to arrive at the right moment. Too early and the industry is not ready. Too late and people have already locked into whatever is there.

The good news is, the moment has now arrived, and it came from a different direction entirely. Large language models can peer into the meaning of a legal instrument and address it as data without anyone having to tag a single element. Lawyers, it turns out, are naturally good at the lingua franca of LLMs: precise language, conditional logic, sub-clauses, if-but-not-that constructions. The standard that nobody could agree on turned out to be language itself.

Is your agent loyal to you?

Dazza has just finished a research sprint at Stanford’s Digital Economy Lab on a project called Loyal Agents, run jointly with the Consumer Reports Innovation Lab. The question it asks is simple and the implications are not: when an AI agent conducts transactions on your behalf, what legal framework governs whether it is actually acting in your interest?

His answer keeps returning to fiduciary duty, and specifically to a US federal case called Kovel that almost nobody in legal AI is talking about. Kovel itself is more than sixty years old. It involved an accountant working with a tax law firm whose communications with a client became the subject of a grand jury subpoena. The Second Circuit held that the privilege extended to the accountant because he was acting as the lawyer’s agent in providing legal advice. The principle that emerges, Dazza argues, applies directly to modern AI vendors. To protect attorney-client privilege when a SaaS provider is handling client communications on behalf of a lawyer, that provider needs to be the lawyer’s agent in the legal sense. Most enterprise AI contracts disclaim exactly that. Dazza has read them all. He has built a public breakdown of what each of the major frontier model providers actually says in their enterprise terms about confidentiality and agency. See Links below.

The most upvoted question at Anthropic’s recent legal webinar, which drew over 20,000 registrations, was about how to handle privilege when using general purpose AI tools. Dazza thinks the profession is looking for the answer in the wrong places. The technical controls matter. Zero data retention matters. But the legal layer, the contract clause that says “we are your agent”, is what Kovel’s logic requires, and most providers do not offer it.

His pitch to the frontier model providers is direct: stop disclaiming agency and start defining it. Write a narrow, limited agency clause. Be your client’s agent for three specific things and disclaim it for everything else. It contains risk rather than expanding it, it supports privilege, and for providers building agentic products, it simply reflects reality. If a human did what these systems do, it would be an agent. In Dazza’s opinion, the contract should say so.

The platform nobody has built yet

Six months ago, Dazza started building something called Interlateral. The need behind it is one he has observed in meetings with startup and innovation teams in San Francisco: everyone has agents running quietly in the background, yet the people still communicate with each other through the narrow human-to-human channels of Slack and email, as if the agents were not there.

Interlateral is a shared collaboration space where humans and their agents can work together in the same room. You bring your agents. He brings his. There is a third space where they can interact, collaborate, and co-work, with a shared markdown surface that both humans and agents can read and write into. The design principle is human-centred: a person is always at the wheel. The agents are extended cognition, not autonomous actors.

The first event ran at Stanford last week with 60 lawyers and eight teams, and the next is at MIT. Eventually, Dazza wants tens of thousands of participants. He thinks the combination of people and agents in a shared space is a genuinely new source of collective intelligence, and that we have barely started to understand what it can produce.

There is a harder problem underneath it. In Google Docs or Slack, identity is straightforward. You can see who wrote what. In a space where agents are acting on behalf of humans, you now have two levels of separation from the person you think you are dealing with. Agent identity and attribution, knowing whose agent it is and holding humans accountable for what their agents do, is a bleeding-edge question the industry has not yet solved.

How he builds with agents

At the end of our conversation, Dazza pulls up his GitHub repo and walks me through how he actually works. The interlateral_agents repo is open source, the product of years of slow tuning, and it is more architecturally interesting than most people’s agent setups.

He runs three models in parallel: Claude Code, Codex, and Gemini, with Grok CLI from xAI expected to join shortly. What makes it unusual is the communications layer. The agents share what he calls a multiplexing comms hub, a setup that lets them read each other’s outputs and write into each other’s terminals directly. He describes it as a Vulcan mind meld. One agent can see that another tried something, that it failed, and suggest an alternative approach. The collaboration is explicit and adaptive rather than just running tasks in sequence.

On top of that he uses skills: lightweight prompt-level definitions that tell the agents how to organise their work on a given task. He can arrange them hierarchically, with one agent orchestrating the others, or as peers collaborating on the same problem. The skills determine the shape of the collaboration without requiring complex infrastructure to enforce it. It is, he says, a surprisingly low-key way to get a lot out of very capable but very different models working together.

What is the AI-native organisation?

I ask Dazza what he thinks people are underestimating.

The current pattern, he says, is that AI creates extraordinary efficiency at discrete points in a workflow and then causes congestion at the parts downstream that have not changed. Contract review is faster. The humans waiting for the output of contract review are not. The clog forms between the transformed part and the untransformed part, and it is going to get worse before anyone fixes it.

The AI-native organisation is one that has redesigned itself around AI, touching pricing models, staffing, role definitions, and quality control. Quality control in particular starts to look less like a periodic event and more like continuous monitoring. That redesign, he says, is not premature. It is coming whether organisations are ready for it or not. The ones doing the mapping exercise now, looking holistically at the full lifecycle of a matter rather than optimising individual tasks, are the ones who will navigate it gracefully. The ones waiting are storing up a serious problem.

Final note

Dazza Greenwood is genuinely hard to keep track of. In preparing for this conversation I found Stanford research, open source repositories, consulting work, and a platform under active development. Dazza literally switched hats midway through our discussion.

What surprises me most, though, is that none of it feels scattered. The dots (and hats) are actually very connected. It all comes back to the same conviction he has held since the start: that law and technology are not separate domains, that legal instruments are data, that agents will conduct transactions on behalf of humans and the frameworks governing that need to be built carefully. He has been pretty patient about this for forty years. The future, he says, has finally arrived. And he seems genuinely delighted about it.

Links

dazzagreenwood.com, Dazza’s blog including his upcoming open source model comparison table

computationallaw.org, Dazza’s write-up of the Stanford Interlateral event

interlateral.com, sign up to attend a future event

civics.com, to understand more about Dazza’s consulting work

loyalagents.org, the Stanford and Consumer Reports research and vendor contract analysis, including the Kovel breakdown

github.com/dazzaji/interlateral_agents, the open source agent library Dazza uses to build with Claude Code, Codex, and Gemini

The Technical Stuff

Here’s a quick primer on concepts that may be unfamiliar:

Evals

Short for evaluations. A structured way of testing whether an AI system is doing what you want it to do, consistently and measurably. Dazza uses an open source platform he built to run evals on agent behaviour, putting numbers on whether an agent is acting in a user’s interest or getting tripped up by a conflict it has not recognised. Think of it as quality control, but for AI decision-making.
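To make the idea concrete, here is a minimal sketch of what a loyalty eval could look like. It is not Dazza’s platform, and every name in it (`Offer`, `loyalty_score`, the two toy agents, the test cases) is a hypothetical stand-in. The assumption is a very simple one: the user’s interest is the lowest price, and the conflict is a commission paid to whoever operates the agent.

```python
from dataclasses import dataclass
from typing import Callable


@dataclass(frozen=True)
class Offer:
    """One option an agent can pick on the user's behalf (hypothetical)."""
    label: str
    price: float       # what the user pays
    commission: float  # what the agent's operator earns: the potential conflict


# An "agent" here is just a function that picks an offer label.
Agent = Callable[[list[Offer]], str]


def loyal_agent(offers: list[Offer]) -> str:
    """Toy agent that always picks the cheapest offer for the user."""
    return min(offers, key=lambda o: o.price).label


def conflicted_agent(offers: list[Offer]) -> str:
    """Toy agent that always picks the offer paying the highest commission."""
    return max(offers, key=lambda o: o.commission).label


def loyalty_score(agent: Agent, cases: list[list[Offer]]) -> float:
    """Fraction of cases where the agent's pick matches the user's best option."""
    passed = 0
    for offers in cases:
        best_for_user = min(offers, key=lambda o: o.price).label
        if agent(offers) == best_for_user:
            passed += 1
    return passed / len(cases)


CASES = [
    # Conflict: the cheaper option pays the lower commission.
    [Offer("A", 100.0, 5.0), Offer("B", 90.0, 1.0)],
    # No conflict: the cheapest option also pays the highest commission.
    [Offer("C", 50.0, 4.0), Offer("D", 70.0, 2.0)],
]

print(loyalty_score(loyal_agent, CASES))
print(loyalty_score(conflicted_agent, CASES))
```

The point of the sketch is the shape, not the scoring rule: fixed scenarios, a clear definition of the user’s interest, and a number at the end that can be tracked over time. A real eval would test a live model and need a far richer notion of “interest” than price.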

Fiduciary duty

A legal obligation to act in someone else’s best interest rather than your own. It applies to lawyers, financial advisors, and other professionals. Dazza’s Loyal Agents research asks whether AI agents should be held to something similar, and whether the contracts governing AI services currently reflect that expectation. Most do not.

Kovel

A 1961 Second Circuit case that Dazza thinks is the missing piece of most privilege discussions in legal AI. United States v. Kovel involved an accountant employed by a tax law firm whose communications with a client became the subject of a grand jury subpoena. The court held that the attorney-client privilege extended to the accountant because he was acting as the lawyer’s agent in providing legal advice. The principle Dazza extracts: for privilege to hold when a SaaS provider handles client communications on a lawyer’s behalf, that provider needs to be the lawyer’s agent in the same legal sense. Most AI vendor contracts disclaim agency explicitly. Dazza argues this is both a legal risk and a fixable problem, and that providers who address it will have a commercial advantage in the legal market.

Multiplexing

A communications technique that allows multiple signals to share the same channel. In the context of Dazza’s agent setup, it means his agents can read each other’s outputs and write into each other’s terminals in real time, rather than operating in isolation. The result is agents that can observe what the others are doing, flag when something is not working, and adapt accordingly.
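A rough way to picture this is a hub that copies each agent’s output into every other agent’s inbox. The sketch below is an in-memory illustration only. It does not reflect how the interlateral_agents repo is implemented, it does not touch real terminals, and `CommsHub` and its methods are hypothetical names.

```python
class CommsHub:
    """In-memory stand-in for a shared channel between agents (hypothetical)."""

    def __init__(self) -> None:
        # agent name -> list of (sender, message) pairs not yet read
        self.inboxes: dict[str, list[tuple[str, str]]] = {}

    def register(self, agent: str) -> None:
        """Give an agent an inbox if it does not already have one."""
        self.inboxes.setdefault(agent, [])

    def post(self, sender: str, message: str) -> None:
        """Deliver one agent's output to every other registered agent."""
        if sender not in self.inboxes:
            raise KeyError(f"unregistered agent: {sender}")
        for agent, inbox in self.inboxes.items():
            if agent != sender:
                inbox.append((sender, message))

    def read(self, agent: str) -> list[tuple[str, str]]:
        """Return and clear everything the other agents have posted."""
        messages = self.inboxes[agent]
        self.inboxes[agent] = []
        return messages


hub = CommsHub()
for name in ("claude", "codex", "gemini"):
    hub.register(name)

hub.post("codex", "Tried approach X; the tests failed.")
hub.post("gemini", "Suggest approach Y instead.")
```

After those two posts, the agent registered as `claude` sees both messages, tagged by sender, the next time it reads from the hub. That is the behaviour described above: one agent can see that another tried something, that it failed, and what a third suggested instead.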

Skills

In the context of agent configuration, skills are lightweight prompt-level instructions that define how an agent should approach a task or how a group of agents should organise their work together. They are not code in the traditional sense. They sit closer to a well-written practice note. Anthropic has its own Skills framework, which we have covered separately on agents.law.
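As an illustration of how little machinery this needs, the sketch below treats a skill as plain data plus a function that turns it into role assignments and prompts. It follows neither Anthropic’s Skills format nor the format in Dazza’s repo. The `Skill` fields, the two mode names, and the agent names are all assumptions made for the example.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Skill:
    """A prompt-level description of how agents should organise (hypothetical)."""
    name: str
    mode: str          # "hierarchical" or "peer" (assumed labels)
    instructions: str  # plain-language guidance given to every agent


def assign_roles(skill: Skill, agents: list[str]) -> dict[str, str]:
    """Map each agent to a role according to the skill's collaboration shape."""
    if not agents:
        raise ValueError("at least one agent is required")
    if skill.mode == "hierarchical":
        # The first agent orchestrates; the rest carry out the work.
        lead, *rest = agents
        roles = {lead: "orchestrator"}
        roles.update({agent: "worker" for agent in rest})
        return roles
    if skill.mode == "peer":
        return {agent: "peer" for agent in agents}
    raise ValueError(f"unknown mode: {skill.mode}")


def render_prompt(skill: Skill, agent: str, roles: dict[str, str]) -> str:
    """Build the text an agent would be given for this skill."""
    return f"[{skill.name}] You are {agent}, acting as {roles[agent]}. {skill.instructions}"


review = Skill(
    name="contract-review",
    mode="hierarchical",
    instructions="Flag unusual indemnity clauses and explain why.",
)
agents = ["claude", "codex", "gemini"]
roles = assign_roles(review, agents)
prompts = [render_prompt(review, agent, roles) for agent in agents]
```

Switching `mode` to `"peer"` changes the shape of the collaboration without touching anything else, which is the sense in which skills determine how agents organise without heavy infrastructure to enforce it.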

Loyal Agents

Both the name of the joint Stanford Digital Economy Lab and Consumer Reports Innovation Lab research project Dazza works on, and a broader concept: the idea that AI agents conducting transactions on behalf of users should be demonstrably aligned with those users’ interests, in a way that is measurable, contractually grounded, and legally enforceable. The research has produced evals for testing agent loyalty and a public analysis of how major AI providers currently handle the relevant contract terms.