Opus 4.5: the next step for legal agents?

Just as we were celebrating the capabilities of Gemini 3, a new benchmark landed

The model announcements keep coming.

Last week, the internet was evangelising about the capabilities of Gemini 3. (TL;DR: It’s very, very good. If you haven’t already, try building something in Google AI Studio. I guarantee you’ll be amazed by what’s possible.)

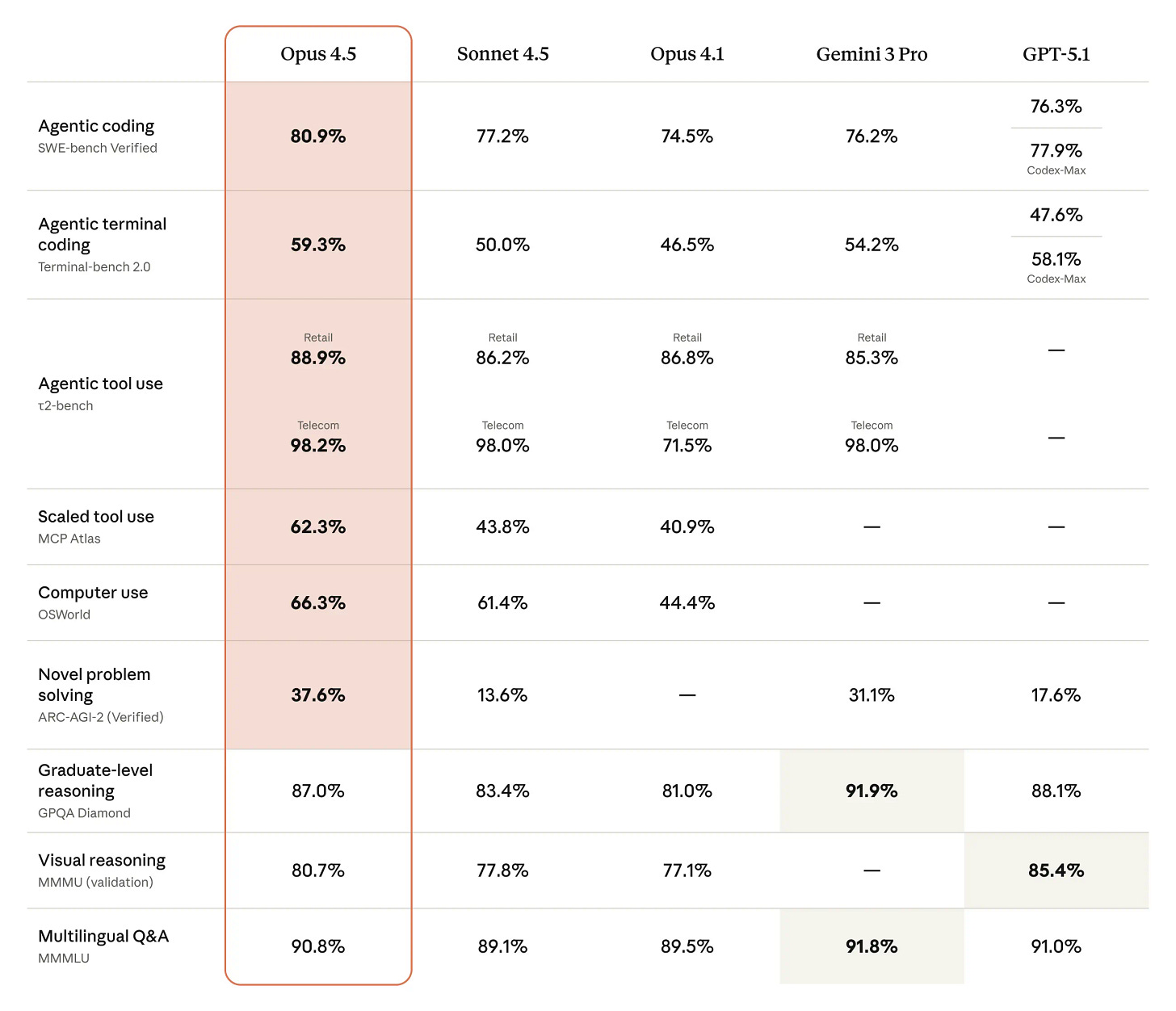

This week, we have Claude Opus 4.5, which promises major advancements in long-horizon task handling, tool use, memory and agentic behaviour.

Let’s look at each of these claims and what they might mean for anyone integrating agents into legal service delivery.

Long-horizon task handling

What this means:

The model is better at staying coherent across multi-step tasks without forgetting what happened earlier or veering off-track.

What it might enable in legal work:

Many legal workflows are sequences: intake → triage → draft → review → approval. If the model holds the thread for longer, agents become more reliable inside these sequences instead of stalling after the early steps.
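To make “holding the thread” more concrete, here is a minimal, hypothetical sketch of a matter moving through the intake → triage → draft → review → approval sequence. The stage names come from the workflow above; the `MatterState` record and its `advance` method are illustrative assumptions, not any vendor’s API. The idea is that each step writes to a shared record, so a later step can check what happened earlier rather than relying on the model to remember it.

```python
from dataclasses import dataclass, field

# Stage order taken from the legal workflow described above.
STAGES = ["intake", "triage", "draft", "review", "approval"]


@dataclass
class MatterState:
    """Hypothetical record of where a matter is and what has happened so far."""
    matter_id: str
    stage: str = "intake"
    history: list = field(default_factory=list)

    def advance(self, note: str) -> str:
        """Log a note against the current stage, then move to the next stage.

        Raises ValueError if the matter is already at the final stage.
        """
        idx = STAGES.index(self.stage)
        if idx == len(STAGES) - 1:
            raise ValueError(f"{self.matter_id} is already at {self.stage}")
        self.history.append(f"{self.stage}: {note}")
        self.stage = STAGES[idx + 1]
        return self.stage


# Usage: two steps completed, so the matter now sits at the draft stage.
matter = MatterState("M-001")
matter.advance("client details captured")
matter.advance("routed to commercial team")
print(matter.stage)    # draft
print(matter.history)  # ['intake: client details captured', 'triage: routed to commercial team']
```

A better long-horizon model would be the thing deciding what to write at each step; the explicit record is what lets you audit whether it stayed on track.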

Tool use

What this means:

The model is meant to be more dependable when interacting with external tools (editing documents, pulling data from spreadsheets, routing information, executing predefined operations) without misinterpreting tool output or failing mid-flow.

What it might enable in legal work:

Most legal processes span multiple systems: contracts, schedules, emails, cap tables, templates and so on. Improved tool use means an agent can move between these without constant human oversight, which is essential for anything beyond surface-level automation.

Memory

What this means:

The model can store and retrieve key information from earlier in the workflow more accurately, not just within one chat but across longer spans of activity.

What it might enable in legal work:

Legal tasks often depend on state: who approved what, which version is current, which assumptions apply, what deadlines exist. If the model can track and retrieve this state reliably, agents can operate more broadly across a matter lifecycle instead of a single moment.

Agentic behaviour

What this means:

“Agentic” is often a vague term, but the meaningful version is this: the model is better at following procedures, making conditional decisions, and knowing when to act vs escalate vs stop.

What it might enable in legal work:

Agents for law need to exercise good judgement. This doesn’t mean they must be fully deterministic; rather, they need the right blend of deterministic and probabilistic behaviour. If Opus 4.5 improves procedural execution, more of the workflow can be delegated without the agent unravelling mid-process.
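One common way to get that blend is to let the model propose an action while a fixed set of rules decides whether it may proceed, escalate or stop. The sketch below is a minimal, hypothetical illustration: the `ModelProposal` fields, the 0.8 confidence floor and the list of actions needing a lawyer’s sign-off are assumptions chosen for the example, not features of Opus 4.5 or any particular product.

```python
from dataclasses import dataclass
from enum import Enum


class Decision(Enum):
    ACT = "act"
    ESCALATE = "escalate"
    STOP = "stop"


@dataclass
class ModelProposal:
    """Hypothetical structured output from the model: what it wants to do and how sure it is."""
    action: str
    confidence: float  # assumed to be a score between 0 and 1


# Illustrative assumptions: actions that always need a human, and a confidence floor.
REQUIRES_SIGN_OFF = {"send_to_counterparty", "file_with_court"}
ALLOWED_ACTIONS = {"draft_clause", "update_tracker"} | REQUIRES_SIGN_OFF
CONFIDENCE_FLOOR = 0.8


def gate(proposal: ModelProposal) -> Decision:
    """Apply deterministic rules to a probabilistic proposal."""
    if proposal.action not in ALLOWED_ACTIONS:
        return Decision.STOP  # unknown action: do nothing
    if proposal.action in REQUIRES_SIGN_OFF:
        return Decision.ESCALATE  # always route to a lawyer, however confident the model is
    if proposal.confidence < CONFIDENCE_FLOOR:
        return Decision.ESCALATE  # model is unsure: ask a human
    return Decision.ACT


print(gate(ModelProposal("draft_clause", 0.93)))     # Decision.ACT
print(gate(ModelProposal("file_with_court", 0.99)))  # Decision.ESCALATE
print(gate(ModelProposal("delete_matter", 0.99)))    # Decision.STOP
```

In a setup like this, the model never decides on its own whether a filing goes out; the rules do. Better procedural execution would show up as more proposals that are well-formed and correctly scoped, while the escalate and stop branches stay in place.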

What this all means

The direction of travel for 2026 is becoming clearer. Firms and legal tech providers have a growing set of tools for building agents that are genuinely useful, bringing the vision of “AI teammates” closer. 2026 is going to be the year of the “agent”. The next job is to work out how best to integrate agents into legal processes.