Law Is Not One Thing

Our industry is very broad and the impact of AI will not be evenly distributed

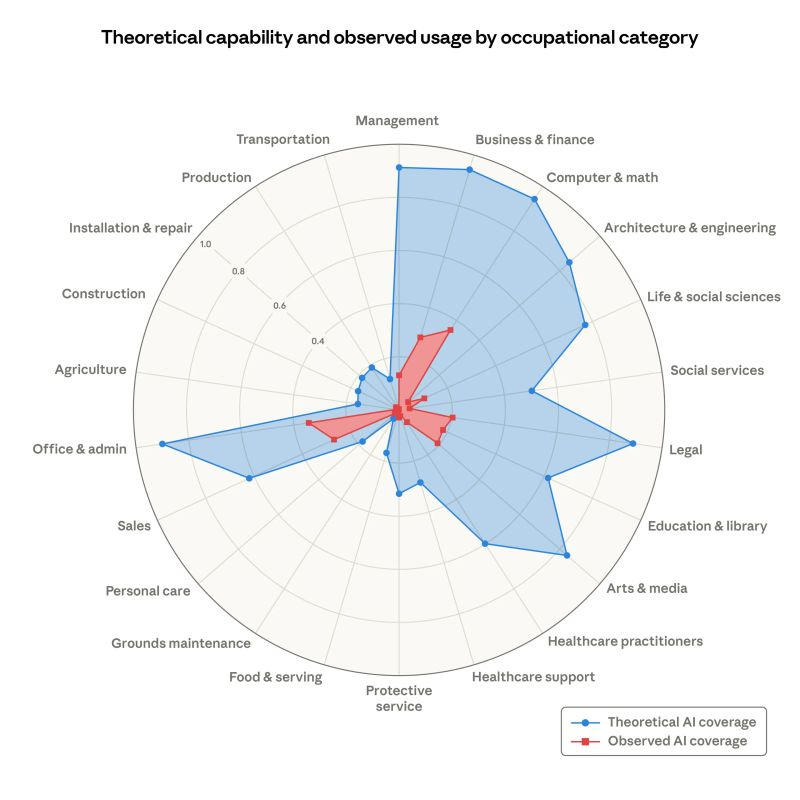

Remember the spider chart Anthropic published recently, the one showing theoretical vs. actual AI coverage across domains?

I love the chart, and (depending on how you look at it) the gap between theoretical and actual coverage is a generational opportunity.

But there is one problem with it, and it’s a nuance we keep missing in the narrative around AI in law: law is not one thing.

While this feels like I am stating the obvious, I see it time and again in the discourse. AI will transform law firms. AI will kill the billable hour. Law is resistant to change. Lawyers will be replaced. Or they won’t. All of these claims share the same flaw.

Let’s agree which part of “law” we are talking about

On paper, law is a single profession. Lawyers go to law school, hold practising certificates, and tend to work either for firms with licences and insurance or within in-house legal teams. Beyond that, they often have less in common than you might think.

Consider three lawyers:

One is running a cross-border M&A deal: coordinating due diligence across fourteen jurisdictions, reviewing dozens of contracts, engaging with the regulator, negotiating an SPA, advising on structure, managing a signing and closing process under time pressure.

Another is handling a probate matter: guiding a bereaved family through the administration of an estate, valuing assets, coordinating tax return filings, distributing funds, navigating family dynamics.

A third is a trial lawyer in the middle of a high-stakes, bet-the-company dispute: cross-examining a hostile witness, making submissions to a judge, reading a courtroom in real time, deciding on the fly whether to press a line of questioning or abandon it.

Yes, these three people share a professional qualification. Yes, they share several foundational skills and patterns of thinking. But the overlap in what they actually do each day is quite thin. The cognitive demands, the pace, the relationship with documents, the role of judgment, the industry or sector nuance: all differ enough that none of these lawyers could step into another's shoes without a significant amount of retraining and unlearning.

And that’s before you get into the difference between working in an AmLaw 20 firm, a UK High Street firm, or the APAC legal department of a Fortune 500 company.

Talking about how “AI will transform law” is a bit like saying “AI will transform sport”. We need to agree which sport we are talking about. All sports involve participants, and most require certain baseline skills such as coordination, reactions, strength or speed, but the impact of AI on Formula 1 is, I would imagine, rather different to its impact on Taekwondo or Badminton or Curling. (Yes, we in the UK are still not over the defeat to Canada.)

Practice areas might not be that helpful either

In an effort to distinguish between the vast array of jobs that lawyers do, we tend to group things by practice area or sector.

I’m a TMT Lawyer. You’re a Commercial Litigator. This is better than lumping everything under “Law”, but I’d argue we should go further when we talk about AI, because even within each of those areas there is enormous variation. Practice areas are effectively bundles of tasks, and those tasks differ radically in their exposure to AI.

The M&A lawyer’s week includes tasks that look like project management, due diligence and contract review, drafting, negotiation, pricing and budgeting, relationship management, and so on. And each of those tasks has a completely different relationship with AI.

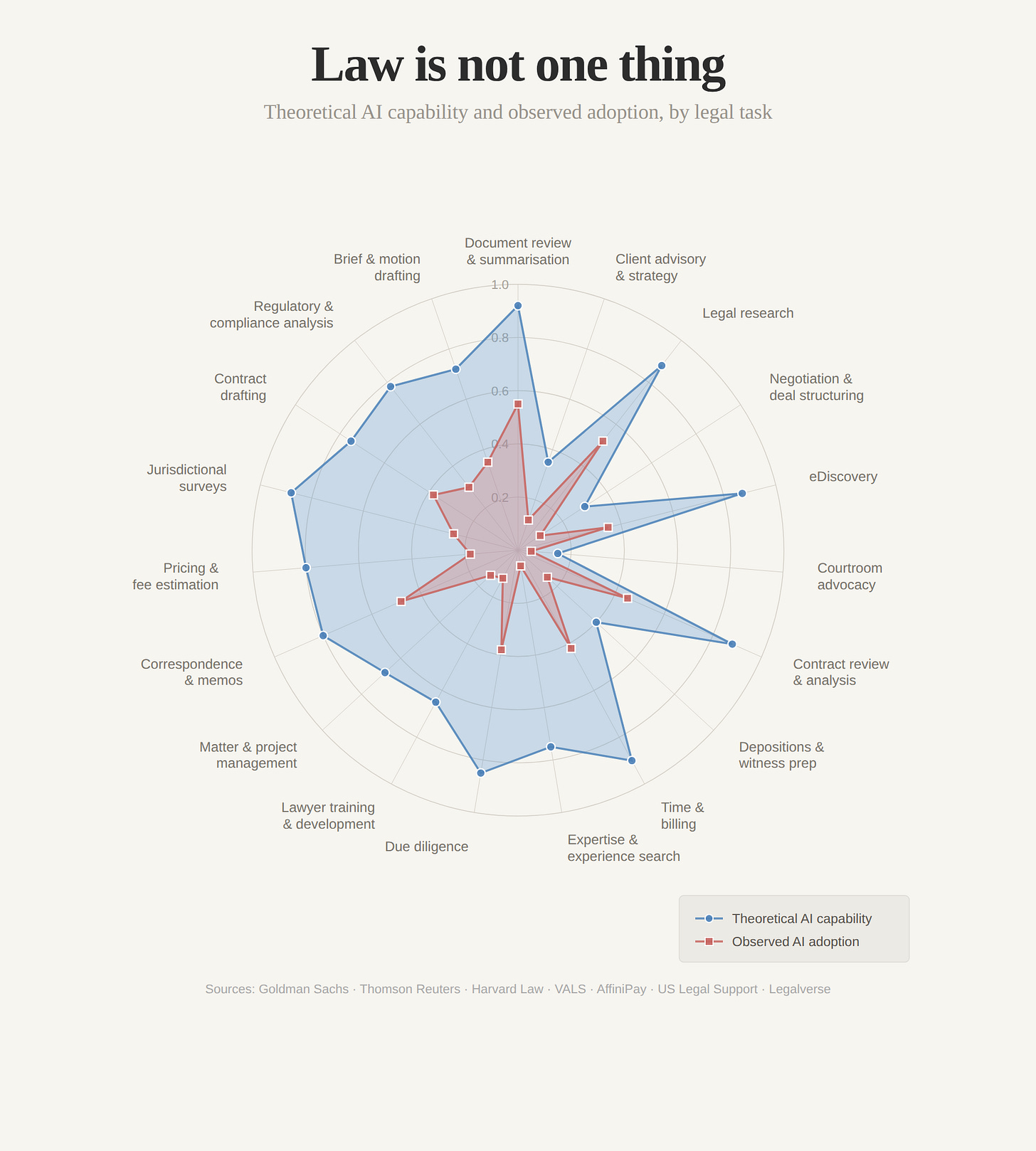

So I remade the chart

I was curious what it would look like if we remade the Anthropic spider chart but rather than look at impact by industry or practice area, we look at it by legal tasks. I came up with 20 legal tasks and applied the same two dimensions as the Anthropic chart: theoretical AI capability and observed adoption.

The numbers are wrong

These numbers are not the output of a controlled study. Like most things in AI right now, they are best guesses, informed by a limited amount of research gathered rather quickly from Goldman Sachs, Thomson Reuters, Harvard Law School’s Center on the Legal Profession, the VALS Legal AI Report, and several industry surveys from 2025 and early 2026. I should probably cross-check against Michael Kennedy’s excellent legal tech stats engine, which was published today. The precise values and even the variables on the chart are absolutely debatable and I would welcome that debate.

But my point is about the shape of the chart. The unevenness, the spikes and collapses, would broadly hold even if every number shifted one way or another. Law is not one thing.

The takeaway from this

My main objective here is just to bring a bit more nuance and clarity to the conversation about AI so we have better discussions.

By thinking in terms of tasks rather than departments or the entire industry, we can:

Make sure we’re actually discussing the same thing (i.e., comparing apples to apples) rather than making broad predictions that are partly right and mostly wrong

Make better decisions about where AI can play a part and where it cannot or should not

Agree which sorts of tasks will remain suited to the billable hour, and which will get unbundled and productised

Identify use cases and lessons from applying AI to a legal task that can be shared across departments and teams

So, next time someone makes a sweeping statement about AI’s impact on law, ask them which legal task they are talking about.

Sources: Goldman Sachs (2023, 2025); Thomson Reuters GenAI in Professional Services Reports (2025, 2026); Harvard Law School Center on the Legal Profession; VALS Legal AI Report (2025); AffiniPay/MyCase Legal Industry Report (2025); US Legal Support (2025); Legalverse Media (2025).
